Machine Learning

You wake up in the morning and ask your virtual personal assistant to brief you on your schedule for the day. Sometime later you leave the house to meet a new client and enter his/her address into your car’s GPS navigation service. Later you check your e-mails, confident that they will have been filtered for any spam. Your social media accounts suggest “people you may know” and you keep getting pop-up ads for holidays since you booked a hotel for a weekend break recently. In the evening you sit down to watch a film which you select from the recommendations your streaming service has provided.

You may not realize this, but none of this would have been possible without the assistance of Machine Learning techniques.

What exactly is Machine Learning?

Machine Learning is a branch of Artificial Intelligence that enables computers to learn from data: they identify patterns, apply what they have learnt without explicit human intervention, and continue to improve over time. Most of us are not even aware of how pervasive it has become or how strongly it shapes the decisions we make in business and in our everyday lives. It drives chatbots and many of the apps on our smartphones. It recommends websites, online stores and products to us. It is deployed in medical research, financial security, video surveillance and many other fields.

How Machine Learning works – five steps

1. Gathering the data

This means collecting as much relevant data as possible for the problem you want to solve, for example from existing databases, IoT devices or data repositories. To get good results, pay attention to both the quantity and the quality of the data.

2. Preparing the data

The better prepared your data is, the better your model can perform. This is where the data is “cleaned up”: real-time data, for example, can be quite disorganized, or items may be missing. Once the data is in the shape you want, you split it into two datasets: the larger one (approx. 80%) is used to train the model, and the remaining data (approx. 20%) is held back for evaluation.
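
To make the cleaning and splitting step concrete, here is a minimal Python sketch. It assumes the data is a list of (feature, label) tuples in which a missing value is represented by `None`; the function names `clean` and `split_dataset` and the sample data are hypothetical and not part of any particular library.

```python
import random

def clean(records):
    """Drop every record that contains a missing (None) value."""
    return [r for r in records if all(v is not None for v in r)]

def split_dataset(records, train_fraction=0.8, seed=42):
    """Shuffle a copy of the records and split them into training and evaluation sets."""
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # fixed seed makes the split reproducible
    cut = int(len(shuffled) * train_fraction)
    return shuffled[:cut], shuffled[cut:]

# Hypothetical raw data: (feature, label) pairs, two of them incomplete.
raw = [(x, 2 * x + 1) for x in range(10)] + [(None, 3), (4, None)]
data = clean(raw)
train, test = split_dataset(data)
print(f"{len(train)} training records, {len(test)} evaluation records")
```

Shuffling guards against an ordered input (for example, sorted by label) producing an unrepresentative evaluation set; for time-ordered data, a chronological split is usually more appropriate.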

3. Training the model

This is where things get interesting: the training dataset is fed into a learning algorithm, which uses supervised or unsupervised learning methods to identify patterns in the data and build a model that can make predictions.
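
As a minimal sketch of supervised training, the snippet below fits a straight line y = a·x + b to labelled training pairs using ordinary least squares. The choice of model, the function names and the data are illustrative assumptions; real projects typically use richer models and a library such as scikit-learn.

```python
def fit_line(points):
    """Learn slope a and intercept b of y = a*x + b by ordinary least squares."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in points)
    var = sum((x - mean_x) ** 2 for x, _ in points)
    if var == 0:
        raise ValueError("need at least two distinct x values")
    a = cov / var
    b = mean_y - a * mean_x
    return a, b

def predict(model, x):
    """Apply the learned pattern to a new input."""
    a, b = model
    return a * x + b

# Hypothetical noisy training data that roughly follows y = 2x + 1.
training_data = [(0, 1.1), (1, 2.9), (2, 5.2), (3, 6.8), (4, 9.1)]
model = fit_line(training_data)
print(model, predict(model, 5))
```

The learned slope and intercept (close to 2 and 1) are the “pattern” the model has extracted from the data; `predict` then applies it to an input it has not seen.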

4. Testing the model

Here, the trained model is evaluated on the held-back evaluation data, i.e. data it has not seen during training, to measure its accuracy. If the results are not satisfactory, you move on to step 5.
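
Measuring accuracy on the held-back data can be as simple as counting correct predictions. The sketch below assumes a classification task; the spam rule standing in for a trained model, the test data and the 90% target are all hypothetical.

```python
def accuracy(model, test_set):
    """Fraction of held-out examples whose predicted label matches the true label."""
    if not test_set:
        raise ValueError("test set is empty")
    correct = sum(1 for features, label in test_set if model(features) == label)
    return correct / len(test_set)

# Hypothetical "trained" classifier: flag an e-mail as spam if it has more than 3 links.
def spam_model(num_links):
    return num_links > 3

# Hypothetical held-out examples: (number of links, is_spam).
test_set = [(0, False), (5, True), (2, False), (7, True), (4, False)]

TARGET_ACCURACY = 0.9  # hypothetical quality bar
score = accuracy(spam_model, test_set)
needs_improvement = score < TARGET_ACCURACY  # if True, continue with step 5
print(f"accuracy = {score:.0%}, improve model: {needs_improvement}")
```

Here the rule misclassifies the e-mail with exactly 4 links, so the model reaches 80% and falls short of the target, which is the cue to move on to improving it.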

5. Improving the model

As the saying goes: “If at first you don’t succeed, try, try again!” The model is improved as often as it takes to reach the desired accuracy. This may involve reviewing the results and, if necessary, adding more data. Or perhaps you need to adjust the algorithm or even choose a new one. At the same time, however, there is a risk of “overfitting”. This can happen when the model is adjusted over and over again against the same evaluation data: at some stage you will “accidentally” arrive at a configuration that scores well on that data but works sub-optimally once put into practice on new or unknown data. To avoid this, it is crucial to have as much good-quality data as possible from the start or, if necessary, to use cross-validation.
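
Cross-validation reduces the risk of tuning against one lucky evaluation split: the data is divided into k folds, and each fold serves once as the evaluation set while the model is trained on the others. The sketch below is a minimal illustration; the deliberately simple model (predict the mean label), the mean-squared-error metric and all names are assumptions made for this example.

```python
def k_fold_indices(n, k):
    """Split indices 0..n-1 into k contiguous folds whose sizes differ by at most one."""
    sizes = [n // k + (1 if i < n % k else 0) for i in range(k)]
    folds, start = [], 0
    for size in sizes:
        folds.append(list(range(start, start + size)))
        start += size
    return folds

def cross_validate(data, k, fit, error):
    """Average validation error when each of the k folds is held out once."""
    scores = []
    for fold in k_fold_indices(len(data), k):
        held_out = set(fold)
        train = [d for i, d in enumerate(data) if i not in held_out]
        valid = [data[i] for i in fold]
        scores.append(error(fit(train), valid))
    return sum(scores) / len(scores)

def fit_mean(train):
    """Toy model: always predict the mean label seen during training."""
    return sum(y for _, y in train) / len(train)

def mse(prediction, valid):
    """Mean squared error of a constant prediction on the validation fold."""
    return sum((y - prediction) ** 2 for _, y in valid) / len(valid)

# Hypothetical labelled data (shuffle it first if the original order is meaningful).
data = [(x, 10.0 + (x % 3)) for x in range(12)]
cv_error = cross_validate(data, k=4, fit=fit_mean, error=mse)
print(cv_error)
```

Because every record is used for evaluation exactly once, a configuration can no longer look good simply by matching one particular evaluation split.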

The benefits of Machine Learning

If you have a specific problem and the solution is buried among huge volumes of data, then Machine Learning is a good fit, since it can process, analyze and extract patterns from this data far more quickly than any human could. Machine Learning is used in forecasting and planning because it lets you build more complex models that take multiple (historical) data sources into account, producing more accurate forecasts. Machine Learning is also the driving force behind predictive analytics, a technique that OPTANO has successfully deployed in its OPTANO Blueprint.

Algorithms are a fundamental part of machine learning, and of computer science in general, since they provide a logical solution path to a particular problem by instructing the computer how to solve it. Naturally, the more accurate an algorithm is and the shorter its runtime, the better. Configuring an algorithm can take days or even weeks, a serious disadvantage for companies that need fast solutions to their business problems.

The OPTANO Algorithm Tuner considerably speeds up this process…

By applying an evolutionary machine learning process, the OPTANO Algorithm Tuner lets you configure the parameters of an existing algorithm so that it is tuned precisely to your use case or to a particular type of input data you already have. The result: shorter, more efficient algorithm runtimes.

OPTANO developers have used the Machine Learning method of supervised learning to improve the Tuner’s performance. In contrast to unsupervised learning, which operates on unlabeled input data, supervised learning relies on input data with given target variables (i.e. the right “answer” is provided) in order to make precise recommendations and predictions. The OPTANO Algorithm Tuner infers these labels from data that is a by-product of earlier iterations, so no additional labor (either manual or automated) is required.
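
The idea of reusing earlier evaluations as labelled training data can be sketched as follows. This is not the OPTANO implementation: the log format, the parameter values and the choice of a k-nearest-neighbour regressor are assumptions chosen for brevity (the code needs Python 3.8+ for `math.dist`). Each past evaluation already pairs a configuration (the input) with its measured runtime (the label), so no extra labelling work is needed.

```python
import math

# Hypothetical log kept as a by-product of earlier tuning iterations:
# (parameter vector scaled to [0, 1], observed runtime in seconds).
history = [
    ((0.1, 0.2), 42.0),
    ((0.5, 0.4), 18.0),
    ((0.9, 0.8), 25.0),
    ((0.4, 0.5), 15.0),
]

def training_set(log):
    """Each past evaluation becomes a labelled example: configuration -> runtime."""
    return [(tuple(params), runtime) for params, runtime in log]

def knn_predict(examples, query, k=2):
    """Predict a runtime as the mean label of the k most similar past configurations."""
    nearest = sorted(examples, key=lambda ex: math.dist(ex[0], query))[:k]
    return sum(label for _, label in nearest) / len(nearest)

examples = training_set(history)
print(knn_predict(examples, (0.45, 0.45)))
```

Scaling the parameters to a common range matters for distance-based models like this one; otherwise, the parameter with the largest numeric range dominates the distance.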

By leveraging a special tuning algorithm called GGA (Gender-based Genetic Algorithm) and gray box optimization, the Tuner can detect sub-optimal configurations at runtime from intermediate status information. As soon as the gray box predicts that a configuration is not optimal for the required use case, it automatically stops the evaluation. To get a better idea of how the genetic algorithm works, we can use Charles Darwin’s “survival of the fittest” analogy: the best configurations of the current generation are selected to create the configurations for the next generation. This process is also assisted by machine learning. Here, the machine learning algorithm predicts the expected quality of the different offspring that can be created by “crossing” (i.e. combining the parameters of) two existing configurations. Only the offspring with the best expected performance are added to the population for the next GGA iteration. In other words: only the fittest configurations survive.
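
The selection and crossover steps described above can be sketched in Python as follows. This is a heavily simplified, hypothetical illustration rather than OPTANO’s implementation: GGA splits the population into a “competitive” and a “non-competitive” gender and selects winners through tournaments, whereas this sketch simply ranks the competitive configurations. The parameter names, the cost function and `predict_cost` (standing in for the learned quality model) are all assumptions.

```python
import random

def crossover(parent_a, parent_b, rng):
    """Uniform crossover: each parameter is inherited from one of the two parents."""
    return {name: rng.choice((parent_a[name], parent_b[name])) for name in parent_a}

def next_generation(competitive, non_competitive, cost, predict_cost,
                    n_winners=2, n_candidates=8, rng=None):
    """One simplified GGA-style step: the fittest competitive configurations mate with
    random non-competitive partners, and a model picks the most promising child."""
    rng = rng or random.Random(0)
    # Survival of the fittest: lower cost (e.g. runtime) means a fitter configuration.
    winners = sorted(competitive, key=cost)[:n_winners]
    offspring = []
    for winner in winners:
        mate = rng.choice(non_competitive)
        candidates = [crossover(winner, mate, rng) for _ in range(n_candidates)]
        # Only the child with the best *predicted* cost joins the next population.
        offspring.append(min(candidates, key=predict_cost))
    return winners + offspring

# Hypothetical cost function: runtime is minimal at alpha=3, beta=5.
def runtime(config):
    return (config["alpha"] - 3) ** 2 + (config["beta"] - 5) ** 2

competitive = [{"alpha": 1, "beta": 1}, {"alpha": 3, "beta": 4}, {"alpha": 9, "beta": 9}]
non_competitive = [{"alpha": 3, "beta": 5}, {"alpha": 0, "beta": 0}]
# For brevity the "learned" predictor is the true cost function; in practice it is a trained model.
population = next_generation(competitive, non_competitive, runtime, predict_cost=runtime)
print(population)
```

Predicting offspring quality is cheap compared with running the target algorithm, so many candidate children can be screened while only the most promising ones are actually kept.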

The major advantage is that previously you would have had to wait for every evaluation to run to completion. The OPTANO Algorithm Tuner instead focuses only on promising configurations, saving you valuable time.
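
The time saving from stopping unpromising evaluations early can be illustrated with a small sketch. It assumes the running algorithm reports intermediate status as (elapsed seconds, fraction of work done) pairs and uses simple linear extrapolation as a stand-in for the learned gray-box predictor; both the status format and the prediction rule are hypothetical.

```python
def run_with_gray_box(status_updates, best_runtime):
    """Follow a running evaluation and stop it as soon as its extrapolated total
    runtime exceeds the best runtime found so far.

    Returns (finished, runtime): the measured runtime if the run completed,
    otherwise the predicted total runtime at the moment it was stopped."""
    predicted_total = float("inf")
    for elapsed, fraction_done in status_updates:
        if fraction_done >= 1.0:
            return True, elapsed
        if fraction_done > 0:
            predicted_total = elapsed / fraction_done  # naive linear extrapolation
            if predicted_total > best_runtime:
                return False, predicted_total  # not promising: abort the evaluation
    return False, predicted_total

def simulated_run(seconds_per_quarter, log):
    """Hypothetical algorithm run that reports its progress after each quarter."""
    for quarter in range(1, 5):
        log.append(quarter)  # records how much work was actually done
        yield seconds_per_quarter * quarter, quarter / 4

slow_log, fast_log = [], []
print(run_with_gray_box(simulated_run(20, slow_log), best_runtime=60))
print(run_with_gray_box(simulated_run(10, fast_log), best_runtime=60))
print(len(slow_log), len(fast_log))
```

The slow run is abandoned after its first status report instead of running to completion, which is exactly where the time saving comes from.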

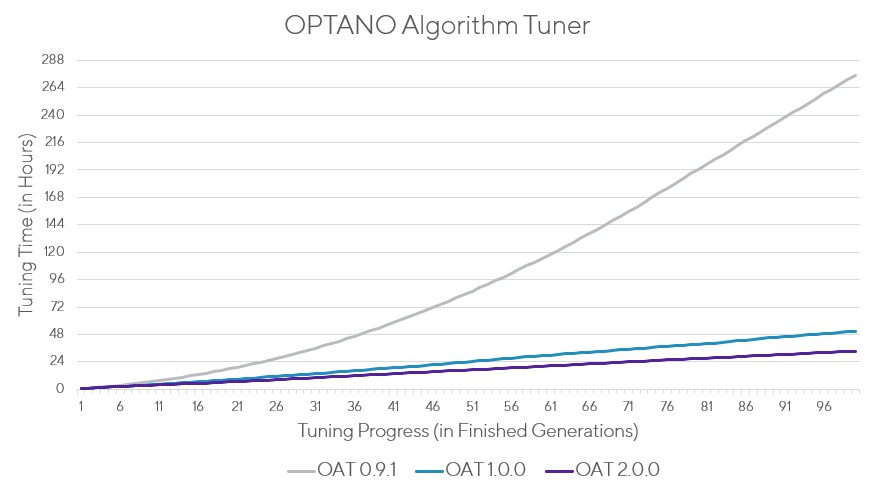

By integrating machine learning techniques in the OPTANO Algorithm Tuner, the time required for tuning could be reduced with every successive version.

Faster solutions with OPTANO and the OPTANO Algorithm Tuner

Because the OPTANO Algorithm Tuner configures algorithms faster and more efficiently, businesses can find solutions to specific problems sooner and thus make better decisions in areas such as scheduling, network planning, production planning and supply chain management. The Tuner is also highly adaptable, meaning that it can be customized to your specific use cases.

The OPTANO Algorithm Tuner with gray box optimization is open source and available free of charge on NuGet or GitHub. Why not try it today?

If you would like to learn more about OPTANO in general and how our optimization techniques can benefit your business, feel free to contact us at any time. We are happy to answer any questions you may have, send you information or demonstrate OPTANO to you personally.